Rain, snow, icy roads… They can all result in dangerous road conditions. How can car technologies help provide real-time weather data and proactive warnings for drivers? During the SARWS project, we already proved that classical sensors (temperature and humidity) could enhance road weather services and safety with local, real-time information. How can we take these initial findings to the next level with AI and deep learning? Continue reading this blog to discover the opportunities of smart sensors!

Setting the SARWS weather scene

If you haven’t read our perspective on the SARWS project, here’s a short recap to set the scene: the SARWS project was set up to evaluate how car technologies can provide real-time weather data and proactive safety warnings for drivers. By equipping vehicles with various types of external sensors, we created a fleet of mobile local weather stations. Together with a fanless mini PC, these sensors formed the In Car Smart Sensor Node (ICSSN), which captures the raw data and then centralizes, (pre-)processes and aggregates it before distributing it further to the cloud. In this first testing stage, we focused on the more classical sensors, like temperature and humidity, and didn’t really include the camera yet. So, what did we do with the camera? We used it to create a smart sensor called ‘WeathercAIm’.

WeathercAIm?

So, what is this WeathercAIm and what does it do? WeathercAIm is a smart sensor inside the vehicle. It combines a camera (a sort of dashcam that functions as the eyes of the vehicle) with the mini PC (the brain), which runs the edge artificial intelligence (AI) processing.

It has AI in it? Surely you must be able to ask it questions about the current weather or generate some pretty weather pictures, right? Or maybe even generate the weather you desire? Wouldn’t that be cool?! With all the fuss, not to say hype, about generative AI, you might overlook the other types of AI. But if you’re a loyal reader of our insights, you know that there are many more types of AI that have already proven their merits in various industries and applications, and that are also very cool.

With WeathercAIm, we didn’t want to generate the weather but use AI to “see” the actual current weather in the pictures taken by the camera. More precisely, for SARWS we wanted to recognize whether there is precipitation, which type of precipitation it is (rain, snow, melting snow, hail), and whether visibility is reduced (fog). In principle, this is a classification task.

Besides the main weather classification goal, we also had to deal with constraints and requirements related to privacy and GDPR, and we had to keep data connection bandwidth and usage to a minimum. As you can imagine, sending tons of pictures into the cloud via a mobile connection would be quite a challenge. That’s why we chose to process the data locally on the edge, for example on the mini PC. This also influenced our choices and approach for the AI model, as you can’t run the super big AI models on the edge the way you can in the cloud.

Supervised learning approach for multiclass classification

Since we’re always in for a challenge, we tackled what are in principle several classification tasks with a single machine learning (ML) classifier. For this, we decided to build one classifier with multiple classes: one for ‘no precipitation’, one for each of the precipitation types, and one for visibility (fog).

⚠ Dry technical text road ahead, with possibly some slippery patches. Skip to the next paragraph if desired.

A supervised learning approach for multiclass classification was used for the ML model. We opted for a classifier based on a convolutional neural network (CNN), a deep learning approach that had already proven its merits in the computer vision field. More precisely, we used a CNN with a ResNet50V2 backbone and a dense layer as the classification head. The backbone network is pre-trained on ImageNet, and the CNN is trained via transfer learning with fine-tuning. The model is implemented in Keras/TensorFlow. If you want even more nitty-gritty details about the (initial) WeathercAIm model, make sure to check out this paper.
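To make the architecture described above a bit more concrete, here is a minimal Keras sketch of a ResNet50V2 backbone with a single dense classification head. It is not the project’s actual code: the class list is taken from the description earlier in this post, and the function names, the 224x224 input size, the two-stage freeze/unfreeze recipe and the learning rate are all assumptions made for illustration.

```python
# Class list assumed from this post's description; the real label set may differ.
CLASS_NAMES = ["no_precipitation", "rain", "snow", "melting_snow", "hail", "fog"]


def build_classifier(num_classes=len(CLASS_NAMES), weights="imagenet",
                     input_shape=(224, 224, 3)):
    """Return (model, backbone): ResNet50V2 features plus one dense softmax head."""
    # Imported here so this module can be loaded without TensorFlow installed.
    import tensorflow as tf

    backbone = tf.keras.applications.ResNet50V2(
        include_top=False,   # drop the original 1000-class ImageNet head
        weights=weights,     # "imagenet" for transfer learning, None for random init
        input_shape=input_shape,
        pooling="avg",       # global average pooling: one feature vector per image
    )
    backbone.trainable = False  # stage 1 (assumed): train only the new head

    inputs = tf.keras.Input(shape=input_shape)
    # ResNet50V2 expects pixel values scaled from [0, 255] to [-1, 1].
    x = tf.keras.layers.Rescaling(1.0 / 127.5, offset=-1.0)(inputs)
    x = backbone(x, training=False)  # keep batch-norm layers in inference mode
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    return tf.keras.Model(inputs, outputs), backbone


def unfreeze_for_fine_tuning(model, backbone, learning_rate=1e-5):
    """Stage 2 (assumed): unfreeze the backbone and recompile with a small step size."""
    import tensorflow as tf

    backbone.trainable = True
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=learning_rate),
        loss="sparse_categorical_crossentropy",  # expects integer class labels
        metrics=["accuracy"],
    )
    return model
```

Calling `build_classifier()` downloads the ImageNet weights on first use. Passing `weights=None` builds the same architecture with random initialization, which is handy for a quick shape check.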

To train an ML model you need data. Unfortunately, for our first ML model, we didn’t have any pictures available from the vehicle fleet yet, as the limited data-gathering campaign would only take place later in the project. To evaluate early on whether WeathercAIm could feasibly detect the weather, an initial model was trained on the public adverse weather dataset (AWD). This initial model seemed to perform quite well on AWD, which indicated that the approach was feasible.

After the model is trained, we can use it to do actual weather classifications. A captured image is processed by the different layers of the CNN to arrive at a weather classification, as you can see in the GIF below. We deployed this initial model in production on the initially limited fleet for the field test and data-gathering campaign.
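The last step of that pipeline, going from the network’s raw output scores to a weather label, can be sketched in a few lines of plain Python. The class names and the example scores below are made up for illustration. The softmax step is the standard way a dense classification head turns scores into probabilities, not a detail confirmed for WeathercAIm.

```python
import math

# Hypothetical label order; the deployed model's classes may differ.
CLASS_NAMES = ["no_precipitation", "rain", "snow", "melting_snow", "hail", "fog"]


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    top = max(scores)  # subtract the maximum for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def classify(scores, class_names=CLASS_NAMES):
    """Return the most likely class and its probability."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    return class_names[best], probs[best]


# Made-up scores for a single image, one score per class.
label, confidence = classify([0.2, 3.1, 0.4, 1.0, -1.2, 0.1])
print(label, round(confidence, 2))
```

Only the label and its probability need to leave the function, which is much smaller than the image itself.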

Field test results

Initial results from the field test revealed that there were still some challenges and room for improvement in the accuracy on real-life production data. Some observations of the field test and data:

- The camera doesn’t perform well in changing lighting conditions and, given its resolution, produces lower-quality images than those in the AWD dataset.

- The environmental settings, e.g. camera placement, urban scenes and weather conditions, were different from those in the AWD dataset, which was recorded in Canada.

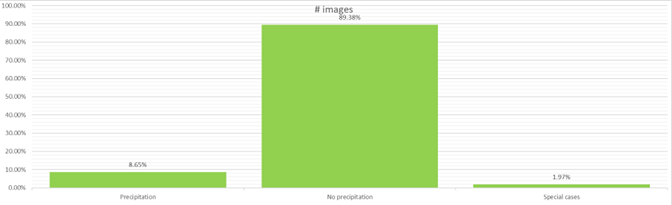

- The actual data was very imbalanced, with ‘no precipitation’ being an extreme majority class (see the dataset distribution figure below). The class distribution was also quite different from that of AWD. In general, it is very challenging to build good models and get good predictions from such highly skewed datasets.

- For some rarer weather types, like hail, there was only a marginal number of images captured, which makes it hard to model them. The real-life data also included special (non-weather) cases, like tunnels, vehicles in the garage, etc.

- The weather condition in the images was sometimes very difficult to judge, even for humans, especially in the lower-quality images. This also made labeling the camera images challenging and tedious.

SARWS field test camera dataset distribution

After the data-gathering part of the field test, the predictions of the initial model were back-tested on the fully captured and labeled field dataset. This showed that, in contrast to its results on the AWD dataset, the model didn’t perform well and achieved a much lower accuracy. This confirmed the problems with lower image quality, difficult-to-judge weather types and class imbalance, as well as the need to update and retrain the WeathercAIm model on this new ground-truth data after the development phase.
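When back-testing on such skewed data, it helps to look beyond plain accuracy. The sketch below uses made-up labels (not the SARWS field data) to show how a model that always predicts the majority class still scores high accuracy while missing every precipitation image. Per-class recall and its average, often called balanced accuracy, are one common way to expose this. Whether the project used these metrics is not stated here, so treat them as an assumption.

```python
from collections import Counter


def accuracy(y_true, y_pred):
    """Fraction of images whose predicted label matches the ground truth."""
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_class_recall(y_true, y_pred):
    """For each true class, the fraction of its images that were recognized."""
    totals = Counter(y_true)
    hits = Counter(t for t, p in zip(y_true, y_pred) if t == p)
    return {label: hits[label] / totals[label] for label in totals}


# Made-up, heavily skewed ground truth: 90 dry images, 8 rain, 2 hail.
y_true = ["no_precipitation"] * 90 + ["rain"] * 8 + ["hail"] * 2
# A useless "model" that always predicts the majority class.
y_pred = ["no_precipitation"] * len(y_true)

acc = accuracy(y_true, y_pred)
recalls = per_class_recall(y_true, y_pred)
balanced_accuracy = sum(recalls.values()) / len(recalls)

print(f"accuracy:          {acc:.2f}")
print(f"per-class recall:  {recalls}")
print(f"balanced accuracy: {balanced_accuracy:.2f}")
```

Here the accuracy is 0.90, yet the recall for rain and hail is zero, which is exactly the kind of failure a single accuracy number hides.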

As with any ML project, good-quality, representative data is crucial to train the ML model!

To improve WeathercAIm to suit the actual field production conditions, a more data-centric approach was followed. To alleviate the skew for the modeling, the ‘no precipitation’ class was split into three classes (dusk/night, sunny, overcast), like in AWD. However, ‘no precipitation’ was still the most frequent category, especially ‘sunny’, which amounted to over 50% of the total dataset. (And Belgium really isn’t that sunny.) In addition, special cases like tunnels were added as new classes, and the loss function was changed to focal loss, which is known to handle class imbalance better. Class weights were also applied to the loss during training to tell the model to “pay more attention” to samples from under-represented classes.
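To give a feel for how these two ingredients work, here is a small plain-Python sketch. The focal loss follows the standard definition, -w * (1 - p)^gamma * log(p), where p is the predicted probability of the correct class. The ‘balanced’ weighting heuristic, the value gamma = 2, and the label counts are assumptions for illustration, not the settings used in the project.

```python
import math
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {c: n_samples / (n_classes * k) for c, k in counts.items()}


def focal_loss(p_true, gamma=2.0, weight=1.0, eps=1e-7):
    """Focal loss for one sample.

    p_true is the probability the model assigns to the correct class.
    The factor (1 - p_true) ** gamma shrinks the loss of easy, confidently
    correct samples; with gamma = 0 this is plain weighted cross-entropy.
    """
    p = min(max(p_true, eps), 1.0)  # avoid log(0)
    return -weight * (1.0 - p) ** gamma * math.log(p)


# Made-up label counts, skewed towards 'sunny'.
labels = ["sunny"] * 60 + ["overcast"] * 25 + ["rain"] * 12 + ["hail"] * 3
weights = balanced_class_weights(labels)

# An easy majority-class sample versus a hard minority-class sample.
easy = focal_loss(0.95, weight=weights["sunny"])
hard = focal_loss(0.30, weight=weights["hail"])
print(f"sunny weight {weights['sunny']:.2f}, hail weight {weights['hail']:.2f}")
print(f"easy sunny loss {easy:.5f}, hard hail loss {hard:.3f}")
```

The rare, poorly predicted hail sample ends up contributing far more to the total loss than the confidently classified sunny one, which is the “pay more attention” effect described above.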

With these changes, the new model’s accuracy improved drastically. It did not reach the level achieved on AWD, but this was expected given the image quality. The precipitation types in particular remained difficult, as the differences between them are hard to spot, even for humans.

So, what now?

During the SARWS project road trip, we learned and reaffirmed the mantra that good-quality data is crucial for ML projects. This also means that the data used for training needs to be representative of the data used in production. Highly imbalanced data can be a challenge, but there are ways to mitigate it. In any case, we’re happy that WeathercAIm could detect the weather classes with reasonably good accuracy.

Now that we know that AI can see the weather from pictures, what can we do with it? It could be applied in all kinds of industries where good local weather indication and prediction is crucial, like agriculture, sports and recreation, mining, and construction. And in a more general context, combining AI with a camera could be used for many more computer vision tasks, as long as you have good-quality data. Feel free to reach out if you want to learn about its opportunities for your project!

In SARWS itself, it was used as one of the sensor inputs for an improved road weather model and a driver app that warns drivers about upcoming weather conditions. In the video below you can see how the contributions of all SARWS project partners come together and how WeathercAIm fits in.